As the title suggests, this article is about building a desktop application for 2D cloth physics simulation using Gio, a GUI library written in Go. To get a taste of what we are trying to build, below is a GIF of the result.

To understand the mechanism behind this kind of computer simulation, on the one hand we need a basic understanding of concepts such as Euler integration, Newton's laws of motion and Verlet integration, and on the other hand we should be able to translate these concepts into computer programs.

This article is divided into the following sections:

- The basics of physics simulation

- Euler integration

- Verlet integration

- The cloth simulation

- A basic overview of the Gio GUI framework

- Translating the mathematical theorems into Go code

The basics of physics simulation

As the name of this article suggests, we will apply physics notions like force, mass, gravity, acceleration, speed and velocity (just to name a few) specifically to cloth simulation, but the underlying principles can be used on other objects too, and they can serve as the basis of more advanced topics like rigid body simulation. Along the way we will see that some basic concepts of cloth simulation, like constraints and joints, are also common notions in 2D physics engines.

Numerical integration

The core algorithm of the simulation is based on two concepts known in physics as Newton's laws of motion and restoring forces. These are expressed in continuous time, but a computer simulation has to use discrete time steps, which means that we must somehow predict the new position of an object at each time step.

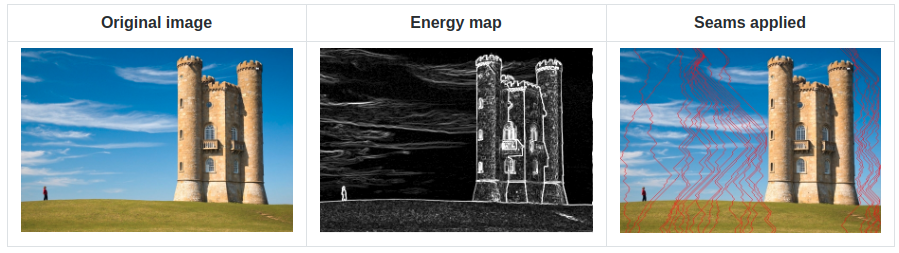

The foundation of every cloth simulation is so-called numerical integration. There are many different techniques and implementations for physics simulation, but most of them use one of three widespread integration methods: Euler integration, Verlet integration and Runge-Kutta. The most significant difference between these methods is the accuracy of the resulting movement.

The Euler integration

Euler integration is often used in rigid body simulation, where the shape of an object is assumed never to change or deform, because it is simple, fast and gives a reasonably good approximation. The problem is that it is not accurate unless we use an extremely small time step, which is something we have no control over.

To calculate the movement of an object over time, we can use Euler integration in the following manner. Each object has a mass and a force acting on it, so Newton's second law of motion gives us: acceleration = force / mass. Now that we know the acceleration, we can advance the object's velocity by acceleration × deltaTime, and then its position by velocity × deltaTime.
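A minimal sketch of such a step is shown below. The `Body` type and the `EulerStep` method are names made up for this illustration and are not taken from the project's source. Updating the velocity before the position is the ordering described in the paragraph above.

```go
package main

import "fmt"

// Body is a hypothetical point mass used only for this illustration.
type Body struct {
	X, Y   float64 // position
	VX, VY float64 // velocity
	Mass   float64
}

// EulerStep advances the body by dt seconds under the force (fx, fy).
func (b *Body) EulerStep(fx, fy, dt float64) {
	// Newton's second law: acceleration = force / mass.
	ax, ay := fx/b.Mass, fy/b.Mass

	// First advance the velocity by the acceleration...
	b.VX += ax * dt
	b.VY += ay * dt

	// ...then advance the position by the updated velocity.
	b.X += b.VX * dt
	b.Y += b.VY * dt
}

func main() {
	b := Body{Mass: 2}
	// One step of 0.1s with a downward force of 19.6 on a mass of 2,
	// which corresponds to an acceleration of 9.8.
	b.EulerStep(0, 19.6, 0.1)
	fmt.Printf("position=(%.3f, %.3f) velocity=(%.3f, %.3f)\n", b.X, b.Y, b.VX, b.VY)
}
```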

This looks good, but the problem appears when accuracy enters the discussion. As mentioned earlier, a computer simulation has to use discrete time steps, and their length varies from frame to frame. In our case deltaTime is never constant. This means that the result of such a numerical integration is never exact, and we cannot accurately predict the velocity and the movement of an object. We could partially overcome this limitation by using a very small time step, but another problem arises: the error is cumulative, which means that the longer we run the simulation, the less accurate the movement becomes. So, let's jump to Verlet integration.
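To make the cumulative error concrete, here is a small sketch that compares Euler steps against the closed-form solution for a body falling from rest. The helper names `eulerFall` and `exactFall` are hypothetical, and a constant step size is assumed to keep the comparison simple.

```go
package main

import "fmt"

// eulerFall returns the distance fallen after `steps` Euler steps of size dt
// under a constant acceleration g, starting at rest.
func eulerFall(g, dt float64, steps int) float64 {
	y, v := 0.0, 0.0
	for i := 0; i < steps; i++ {
		v += g * dt
		y += v * dt
	}
	return y
}

// exactFall is the closed-form solution y = g*t^2/2.
func exactFall(g, t float64) float64 {
	return 0.5 * g * t * t
}

func main() {
	const g = 9.8
	cases := []struct {
		dt    float64
		steps int
	}{
		{0.1, 10},   // 1 second, coarse step
		{0.01, 100}, // 1 second, finer step
		{0.1, 100},  // 10 seconds, coarse step
	}
	for _, c := range cases {
		t := c.dt * float64(c.steps)
		euler := eulerFall(g, c.dt, c.steps)
		exact := exactFall(g, t)
		fmt.Printf("dt=%.2f t=%.0fs euler=%.3f exact=%.3f error=%.3f\n",
			c.dt, t, euler, exact, euler-exact)
	}
}
```

With these inputs the overshoot is roughly 0.49 after one second at dt = 0.1 and roughly 0.049 at dt = 0.01, but it grows to roughly 4.9 after ten seconds at dt = 0.1: a smaller step reduces the error, while a longer run accumulates it.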

Verlet integration

Verlet integration is very similar to Euler integration, only this time we get rid of the explicit velocity component. We only need to calculate the change in position of an object (in our simulation, a particle) by applying Newton's second law of motion: F = mA, or force equals mass times acceleration. It follows that acceleration equals force over mass, A = F/m. Like position and velocity, acceleration is a vector, so in 2D it is computed per axis. So, knowing the current position, the previous position and the acceleration, we can calculate the position at the next frame.

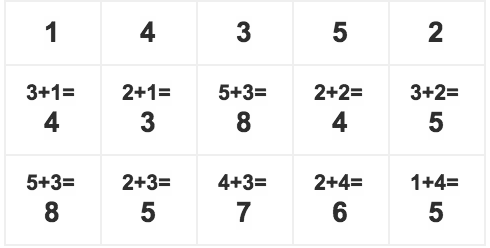

The Verlet integration formula is the following:

$$x(t + \Delta t) = 2x(t) - x(t - \Delta t) + a(t)\,\Delta t^2$$
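For readers wondering where this formula comes from, here is a short derivation, assuming that the position x(t) is smooth enough to be Taylor-expanded around t, with v denoting the velocity. Adding the forward and the backward expansion cancels the velocity and the third-order terms:

$$
\begin{aligned}
x(t+\Delta t) &= x(t) + v(t)\,\Delta t + \tfrac{1}{2}a(t)\,\Delta t^2 + \tfrac{1}{6}\dddot{x}(t)\,\Delta t^3 + O(\Delta t^4)\\
x(t-\Delta t) &= x(t) - v(t)\,\Delta t + \tfrac{1}{2}a(t)\,\Delta t^2 - \tfrac{1}{6}\dddot{x}(t)\,\Delta t^3 + O(\Delta t^4)\\
x(t+\Delta t) + x(t-\Delta t) &= 2x(t) + a(t)\,\Delta t^2 + O(\Delta t^4)
\end{aligned}
$$

Moving x(t − Δt) to the right-hand side and dropping the O(Δt⁴) term gives the update rule, in which the velocity never appears explicitly.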

We can translate this into the following code:
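A minimal sketch of one such step, applied per axis, could look like this. The `Point` type and the `VerletStep` method are hypothetical names chosen for the illustration. Tunables such as friction or drag are left out on purpose.

```go
package main

import "fmt"

// Point is a hypothetical particle that remembers its previous position
// instead of storing a velocity.
type Point struct {
	X, Y   float64 // current position
	PX, PY float64 // position at the previous step
}

// VerletStep advances the point by dt under the acceleration (ax, ay):
// x(t+dt) = 2*x(t) - x(t-dt) + a(t)*dt^2
func (p *Point) VerletStep(ax, ay, dt float64) {
	nx := 2*p.X - p.PX + ax*dt*dt
	ny := 2*p.Y - p.PY + ay*dt*dt

	// The current position becomes the previous one for the next step.
	p.PX, p.PY = p.X, p.Y
	p.X, p.Y = nx, ny
}

func main() {
	// The point moved one unit to the right during the last step, so it
	// keeps drifting right while a constant acceleration pulls it down.
	p := Point{X: 0, Y: 0, PX: -1, PY: 0}
	for i := 0; i < 3; i++ {
		p.VerletStep(0, 2, 1)
		fmt.Printf("step %d: x=%.1f y=%.1f\n", i+1, p.X, p.Y)
	}
}
```

Note how the initial motion is encoded purely by placing the previous position one unit to the left of the current one.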

Compared to Euler integration, the velocity here is not accumulated from the acceleration; it is implied by the difference between the current and the previous position. That is pretty much all there is to Verlet integration. The fun part comes when we need to tweak values like gravity, drag, elasticity, friction, etc. We'll come back to this later.

Now let's move on and discuss another key component of our system: constraints.

Constraints

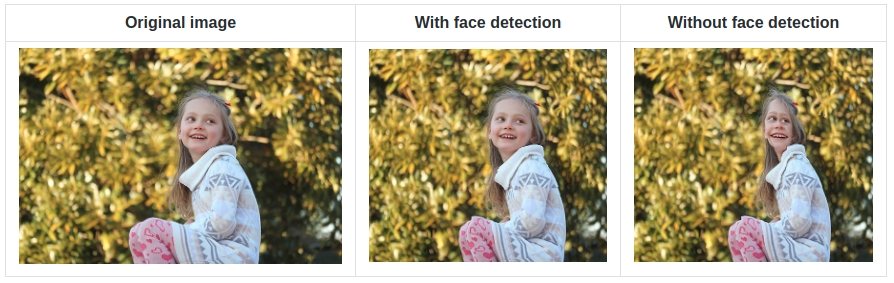

As I mentioned earlier, the entities of our system are the particles. On their own they act individually, without any constraints between each other. To get a complex and homogeneous system whose components act together, we have to apply some kind of rules, or constraints, which hold these individual components together. In some simulations these are called sticks or springs, because they connect the particles to each other.

In Go there are no classes, so we should store the constraint components in a struct.

A particle, besides its current position, should obviously also store its new position and its velocity in the x and y dimensions. These are mandatory, but along the way it can be extended with other parameters like friction, elasticity and drag, or with information such as whether it is pinned or active. The latter is important in a tearable cloth simulation. We'll get to that shortly.

Now comes the fun and most challenging part: simulating the cloth physics using the Verlet integration presented earlier.
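As a rough sketch, the two structs could be laid out as follows. The fields `x`, `y`, `isActive`, `highlighted`, `p1` and `p2` appear in the listings later in this article. Every other field, as well as both constructor functions, is an assumption made for the illustration.

```go
package main

import (
	"fmt"
	"math"
)

// Particle is one node of the cloth.
type Particle struct {
	x, y        float64 // current position
	px, py      float64 // previous position (assumed; Verlet needs it)
	vx, vy      float64 // velocity per axis (assumed)
	pinned      bool    // assumed: a pinned particle does not fall
	isActive    bool    // false once the cloth is torn at this node
	highlighted bool    // true while under the mouse focus area
}

// Constraint is a "stick" holding two particles at a rest distance.
type Constraint struct {
	p1, p2 *Particle
	length float64 // rest length (assumed)
}

// NewParticle creates an active particle at rest at (x, y).
func NewParticle(x, y float64) *Particle {
	return &Particle{x: x, y: y, px: x, py: y, isActive: true}
}

// NewConstraint joins two particles and records their current distance
// as the rest length.
func NewConstraint(p1, p2 *Particle) *Constraint {
	return &Constraint{
		p1:     p1,
		p2:     p2,
		length: math.Hypot(p2.x-p1.x, p2.y-p1.y),
	}
}

func main() {
	c := NewConstraint(NewParticle(0, 0), NewParticle(3, 4))
	fmt.Printf("rest length: %.1f\n", c.length)
}
```

Storing pointers to the particles, rather than copies, lets every constraint that shares a particle see the same position.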

The cloth simulation

This is the phase where we put together all the individual pieces presented earlier. We will start with the basic entity of our system: the cloth. As you might guess, the cloth is built from the constraints and the particles they act upon. So, let's start building the cloth. As was the case with particles and constraints, we store the cloth-related information in a struct.

As you can see, the Cloth struct also stores the particles and the constraints. Now let's initialize the cloth.

Each particle is connected to its left and top neighbours, so the particles in the first column and the first row have no such stick. Why? Because the particles are joined with sticks from left to right and from top to bottom. The spacing component defines the distance between two particles, which is calculated based on the window width and height. The pinX variable tells whether a particle should be pinned or not. If it is pinned, the particle will not fall.
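The wiring described above can be sketched roughly as follows. This is not the project's actual `Init` function: the field names `width`, `height`, `spacing` and `pinned`, the loop bounds and the method signature are assumptions, and the sketch expects at least 7 columns so that `clothX / 7` is not zero.

```go
package main

import "fmt"

type Particle struct {
	x, y   float64
	pinned bool
}

type Constraint struct{ p1, p2 *Particle }

type Cloth struct {
	width, height, spacing int
	particles              []*Particle
	constraints            []*Constraint
}

// Init lays the particles out on a grid starting at (posX, posY) and joins
// each one to its left and top neighbour, where those exist.
func (c *Cloth) Init(posX, posY int) {
	clothX := c.width / c.spacing  // number of columns
	clothY := c.height / c.spacing // number of rows

	for y := 0; y < clothY; y++ {
		for x := 0; x < clothX; x++ {
			p := &Particle{
				x: float64(posX + x*c.spacing),
				y: float64(posY + y*c.spacing),
			}

			// Pin evenly spaced particles of the first row.
			pinX := x % (clothX / 7)
			p.pinned = y == 0 && pinX == 0

			if x != 0 {
				// The left neighbour is the particle appended last.
				left := c.particles[len(c.particles)-1]
				c.constraints = append(c.constraints, &Constraint{p1: left, p2: p})
			}
			if y != 0 {
				// The top neighbour sits exactly one row earlier.
				top := c.particles[x+(y-1)*clothX]
				c.constraints = append(c.constraints, &Constraint{p1: top, p2: p})
			}
			c.particles = append(c.particles, p)
		}
	}
}

func main() {
	cloth := &Cloth{width: 140, height: 60, spacing: 10}
	cloth.Init(20, 20)
	fmt.Println("particles:", len(cloth.particles))
	fmt.Println("constraints:", len(cloth.constraints))
}
```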

pinX := x % (clothX / 7) means that the cloth should have 7 pinned points in the first row along the x coordinate. We store the particles and constraints in two separate slices. Now let's see what the update function looks like. I think this is the proper time to introduce the Gio event system.

Introducing the Gio GUI library

Gio is a cross-platform, event-driven, immediate-mode GUI library which supports all the major platforms (even WebAssembly). You can check the project page at https://gioui.org/. Now let's see how we can create a basic desktop application with Gio.

A Go developer should recognize this pattern instantly. The application is running inside a goroutine which blocks until some external events are triggered, like a system close or destroy event. Now let’s analyze the loop() function a little bit.

For the sake of simplicity and clarity I have omitted many of the details of the cloth simulation and kept only the most relevant part. You can see that there is an infinite for loop which runs until the application is closed, either by clicking the close button or by pressing the Escape key. On each window frame event the cloth is updated. Now let's analyze the Update function.

```go
// Update is invoked on each frame event of the Gio window.
// It updates the cloth particles, the basic entities on which the
// cloth constraints are applied and solved using Verlet integration.
func (cloth *Cloth) Update(gtx layout.Context, delta float64) {
	for _, p := range cloth.particles {
		p.Update(gtx, delta)
	}

	for _, c := range cloth.constraints {
		if c.p1.isActive && c.p2.isActive {
			c.Update(gtx, cloth)
		}
	}

	var path clip.Path
	path.Begin(gtx.Ops)

	// For performance reasons we draw the sticks as a single clip path
	// instead of multiple clip paths. The performance improvement is
	// considerable compared to drawing each clip path separately.
	for _, c := range cloth.constraints {
		if c.p1.isActive && c.p2.isActive {
			path.MoveTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)).Add(f32.Point{X: 1.2}))
			path.LineTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)).Add(f32.Point{X: 1.2}))
			path.Close()
		}
	}

	// We are using `clip.Outline` instead of `clip.Stroke` because it
	// performs much better, but it means we need to draw the full
	// outline of the stroke ourselves.
	paint.FillShape(gtx.Ops, cloth.color, clip.Outline{
		Path: path.End(),
	}.Op())

	path.Begin(gtx.Ops)
	for _, c := range cloth.constraints {
		if c.p1.isActive && c.p2.isActive {
			path.MoveTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)).Add(f32.Point{Y: 1.2}))
			path.LineTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)).Add(f32.Point{Y: 1.2}))
			path.Close()
		}
	}

	paint.FillShape(gtx.Ops, cloth.color, clip.Outline{
		Path: path.End(),
	}.Op())

	// Here we are drawing the mouse focus area in a separate clip path,
	// because the color used for highlighting the selected area
	// should be different from the cloth's default color.
	for _, c := range cloth.constraints {
		if (c.p1.isActive && c.p1.highlighted) &&
			(c.p2.isActive && c.p2.highlighted) {
			// col is defined outside this excerpt.
			c.color = color.NRGBA{R: col.R, A: col.A}

			path.Begin(gtx.Ops)
			path.MoveTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)).Add(f32.Point{X: 1}))
			path.LineTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)).Add(f32.Point{X: 1}))
			path.Close()

			path.MoveTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)))
			path.LineTo(f32.Pt(float32(c.p2.x), float32(c.p2.y)).Add(f32.Point{Y: 1}))
			path.LineTo(f32.Pt(float32(c.p1.x), float32(c.p1.y)).Add(f32.Point{Y: 1}))
			path.Close()

			paint.FillShape(gtx.Ops, c.color, clip.Outline{
				Path: path.End(),
			}.Op())
		}
	}
}
```

Having two separate for…range loops is a little cumbersome, but unfortunately there is a bug in the Gio path renderer which creates unwanted contour artifacts on the drawn lines. An issue has been filed at https://todo.sr.ht/~eliasnaur/gio/474 and hopefully it will be fixed soon.

Updating the cloth

There are two things happening in the Update function: on the one hand we are updating the particles and the constraints using the Verlet integration presented earlier, and on the other hand we are drawing the cloth sticks using some of Gio's drawing methods. We update a constraint only if both particles it acts upon are active. This is an efficient way to simulate tearing the cloth apart or making a hole in its structure: if some segments of the cloth are broken, or the connection between certain particles is lost, we can simply skip updating the corresponding constraints.

```go
// With right click we can tear up the cloth at the mouse position.
if mouse.getRightButton() {
	if dist < float64(focusArea) {
		p.isActive = false
	}
}
```
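To show how tearing and constraint solving interact, here is a self-contained sketch. The `Tear` and `SolveAll` functions are hypothetical, and the way each stick is relaxed (both end points move half of the error towards the rest length) is a commonly used scheme assumed here, not necessarily what the project does in `c.Update`.

```go
package main

import (
	"fmt"
	"math"
)

type Particle struct {
	x, y     float64
	isActive bool
}

type Constraint struct {
	p1, p2 *Particle
	length float64 // rest length
}

// Tear deactivates every particle closer to (mx, my) than focusArea.
func Tear(particles []*Particle, mx, my, focusArea float64) {
	for _, p := range particles {
		dist := math.Hypot(p.x-mx, p.y-my)
		if dist < focusArea {
			p.isActive = false
		}
	}
}

// SolveAll relaxes every constraint whose two particles are still active
// and returns how many constraints were solved.
func SolveAll(constraints []*Constraint) int {
	solved := 0
	for _, c := range constraints {
		if !c.p1.isActive || !c.p2.isActive {
			continue // a torn stick no longer holds anything together
		}
		dx := c.p2.x - c.p1.x
		dy := c.p2.y - c.p1.y
		dist := math.Hypot(dx, dy)
		if dist == 0 {
			continue // coincident points: the direction is undefined
		}
		// Each end point absorbs half of the length error.
		diff := (dist - c.length) / dist * 0.5
		c.p1.x += dx * diff
		c.p1.y += dy * diff
		c.p2.x -= dx * diff
		c.p2.y -= dy * diff
		solved++
	}
	return solved
}

func main() {
	a := &Particle{x: 0, y: 0, isActive: true}
	b := &Particle{x: 10, y: 0, isActive: true}
	c := &Particle{x: 30, y: 0, isActive: true} // stretched away from b
	sticks := []*Constraint{
		{p1: a, p2: b, length: 10},
		{p1: b, p2: c, length: 10},
	}

	Tear([]*Particle{a, b, c}, 0, 0, 5) // tear the cloth around particle a
	n := SolveAll(sticks)
	fmt.Printf("solved %d constraint(s); b.x=%.1f c.x=%.1f\n", n, b.x, c.x)
}
```

Because the first stick touches a deactivated particle, it is skipped, and only the second one pulls its end points back to the rest length.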

These are some of the basic components of a cloth simulation, but the application can be extended much further: adjusting the mouse influence or the mouse dragging force, tearing the cloth apart based on the intensity applied with the mouse over a cloth region, or even adding a control panel for adjusting certain variables on the fly. And the list can continue. We are not going to cover all of these ideas, but you can check the source code on the project's GitHub page: https://github.com/esimov/cloth-physics

---

Final thoughts

I think that's all I wanted to share, so it's time to sum up the conclusions in a few words. For me, making a 2D cloth simulation was a fun way to apply some fundamental mathematical concepts, like integration and differentiation, to a computer program.

As for the Gio library, it is still under development, so it doesn't have a stable API yet, which results in broken functionality from one release to another. But that's fine; we should acknowledge that it's an ongoing project. What's cool about it is the performance: it's really fast and stable. It has never crashed unexpectedly or panicked on me. It has some bugs and issues, but nothing serious. Considering that Gio hasn't been tagged with a stable SemVer version, it's in quite good shape.

If you liked this article and have questions, do not hesitate to contact and follow me on Twitter. You can also follow me on GitHub to receive updates about what I'm working on. And if you want to try out the application, you can download or install it from the project's GitHub page: https://github.com/esimov/cloth-physics, and star it if you like it.

Links and references:

https://github.com/esimov/cloth-physics

https://gioui.org/

https://jonegil.github.io/gui-with-gio/ - a gentle introduction to Gio.

https://twitter.com/simo_endre