In my last few articles I wrote about Pigo, the face detection library I have developed in Go. This article is another one in the series, but this time I’m focusing on the WASM (WebAssembly) integration. This is another key milestone in the library’s evolution, considering that when I started the project the library was capable only of face detection. Later on it was extended to support pupil/eye localization, then facial landmark point detection, and it has also been adapted so that it can be integrated into different programming languages as a shared object (SO) library. I’m delighted by the great acceptance and support received from the programming community during the development: the library has been featured a couple of times in https://golangweekly.com/, has received 2.5k stars on its Github repo (and counting), and has gotten a lot of positive feedback on Reddit, which means the effort has paid off.

But first what is WASM? To quote the https://webassembly.org/ homepage:

WebAssembly (abbreviated Wasm) is a binary instruction format for a stack-based virtual machine. Wasm is designed as a portable target for compilation of high-level languages like C/C++/Rust, enabling deployment on the web for client and server applications.

In other words, compiling a native application to the WASM target gives the resulting web application a speed close to that of the native one.

Starting from v1.11, experimental support for WASM has been included in the Go language, and it has been extended in v1.12 and v1.13. As I mentioned in the previous articles, Go suffers terribly in terms of native and platform-agnostic webcam support, and to my knowledge there is currently no single webcam library in the Go ecosystem which is platform independent. This was the reason why, to prove the library’s real time face detection capabilities, I opted to export the main function as a shared object library. This later proved to be inefficient in terms of real time performance, since on each frame the Python app had to transfer the pixel array to the Go app (where the face detection happens) and get back the coordinates of the detected faces. Because of this two-way (back and forth) communication, the process falls behind considerably in terms of pure performance.

WASM to the rescue

As I mentioned above, starting from v1.11 the standard Go code base includes the syscall/js package, which targets WASM. However, the API has been refactored and has gone through a few iterations to become stable as of v1.13. This also means that the WASM API of v1.13 is no longer compatible with that of v1.11. The API has become mature enough that there is no need to use external libraries targeting the Javascript runtime in Go, like Gopherjs.

In order to compile for WebAssembly we need to explicitly set the GOOS=js and GOARCH=wasm environment variables in the build process. Running the command below will build the package and produce an executable WebAssembly module file with the .wasm extension.

$ GOOS=js GOARCH=wasm go build -o lib.wasm wasm.go

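This file can then be referenced in the main html file, where it is loaded with the help of the wasm_exec.js support script shipped with the Go distribution.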

That’s all we need to do in order to have a fully functional WASM application. The hardest part comes afterwards. When targeting WASM there are a few things we need to keep in mind in order for the implementation to run as smoothly as possible:

- To access a Javascript global variable in Go we first have to call the js.Global() function, which returns the JS global object (window in the browser).

- To call a JS object method we have to use the Call() function.

- To get or set an attribute of a JS object or HTML element we can call the Get() or Set() methods.

- Probably the trickiest part of the Go WASM port is related to the callback functions, and there are a lot of places where we need to take care of them. One of the most important ones is the requestAnimationFrame method of the window object, which accepts a callback function as its argument. To pass a callback from Go we need to wrap it with js.FuncOf(func(this js.Value, args []js.Value) interface{} {...}), where the second parameter, args, holds the arguments the callback is invoked with.

A very important note: if the function body blocks (for example because it waits on another JS event), it should be run inside a goroutine, otherwise you’ll get a deadlock runtime exception. You can check the package documentation here: https://godoc.org/syscall/js
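The points above can be put together in a short sketch. It is only an illustration, not the code used in Pigo: the canvas size and the moving square are made up, and the program has to be built with GOOS=js GOARCH=wasm and run in a browser.

```go
package main

import "syscall/js"

func main() {
	// js.Global() returns the JavaScript global object (window in a browser).
	window := js.Global()
	doc := window.Get("document")

	// Call() invokes a method on a JS object, Set() assigns a property.
	canvas := doc.Call("createElement", "canvas")
	canvas.Set("width", 640) // arbitrary size chosen for this sketch
	canvas.Set("height", 480)
	doc.Get("body").Call("appendChild", canvas)

	// Get() reads a property back; Int() converts it to a Go int.
	width := canvas.Get("width").Int()
	height := canvas.Get("height").Int()
	ctx := canvas.Call("getContext", "2d")

	frames := 0
	var render js.Func
	render = js.FuncOf(func(this js.Value, args []js.Value) interface{} {
		// Nothing in this body blocks, but the work is still moved to a
		// goroutine to show the pattern from the note above: the callback
		// returns immediately and the JS event loop is never held up.
		go func() {
			frames++
			ctx.Call("clearRect", 0, 0, width, height)
			ctx.Call("fillRect", frames%width, 10, 20, 20)
			// Schedule the next frame with the same wrapped function.
			window.Call("requestAnimationFrame", render)
		}()
		return nil
	})
	window.Call("requestAnimationFrame", render)

	// Block forever so the callbacks stay alive after main would return.
	select {}
}
```

The wrapped function is kept in a variable so that it can reschedule itself on every frame. A callback that is only needed once should be freed with its Release() method after use.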

The implementation details

Now let’s take a deep breath and dive into the implementation details. One of the key components of the face detection application is the binary cascade file parser. Under normal circumstances, when we fetch and parse the binary files locally, we can rely on the os package. This is not the case with WASM, because we do not have access to the system environment, which means that we cannot load and parse files sitting on our local storage. We need to fetch them through the supported Javascript methods, like fetch. In Javascript this method returns a promise, whose then method accepts two callbacks: one for success and one for failure. As I mentioned previously, the callback functions need to be invoked in separate goroutines, and the most straightforward way to orchestrate the results when dealing with goroutines is to use channels. So the fetch method should return the parsed binary file as a byte array, and an error in case of failure.
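A minimal sketch of such a fetch helper could look like the following. The function name fetchBytes and the file path are placeholders of mine, not names taken from Pigo. The sketch also deviates slightly from the description above: instead of starting goroutines inside the callbacks, it sends on buffered channels, so the callbacks never block.

```go
package main

import (
	"errors"
	"fmt"
	"syscall/js"
)

// fetchBytes (hypothetical name) downloads url with the browser's fetch API
// and blocks the calling goroutine until the body is copied into Go memory.
func fetchBytes(url string) ([]byte, error) {
	// Buffered channels: a send from inside a JS callback never blocks.
	dataCh := make(chan []byte, 1)
	errCh := make(chan error, 1)

	// First step of the promise chain: request the body as an ArrayBuffer.
	onResponse := js.FuncOf(func(this js.Value, args []js.Value) interface{} {
		return args[0].Call("arrayBuffer")
	})
	defer onResponse.Release()

	// Second step: view the ArrayBuffer as a Uint8Array and copy it to Go.
	onBuffer := js.FuncOf(func(this js.Value, args []js.Value) interface{} {
		view := js.Global().Get("Uint8Array").New(args[0])
		data := make([]byte, view.Get("length").Int())
		js.CopyBytesToGo(data, view)
		dataCh <- data
		return nil
	})
	defer onBuffer.Release()

	// Failure path: turn the rejection reason into a Go error.
	onError := js.FuncOf(func(this js.Value, args []js.Value) interface{} {
		errCh <- errors.New(js.Global().Call("String", args[0]).String())
		return nil
	})
	defer onError.Release()

	js.Global().Call("fetch", url).
		Call("then", onResponse).
		Call("then", onBuffer).
		Call("catch", onError)

	// Wait for whichever callback fires first.
	select {
	case data := <-dataCh:
		return data, nil
	case err := <-errCh:
		return nil, err
	}
}

func main() {
	// "cascade/facefinder" is a placeholder path for this sketch.
	data, err := fetchBytes("cascade/facefinder")
	if err != nil {
		fmt.Println("fetch failed:", err)
		return
	}
	fmt.Printf("fetched %d bytes\n", len(data))
}
```

Note that fetchBytes must not be called from inside another wrapped callback without a goroutine, since waiting on the channels there would block the JS event loop that has to deliver the result.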

The rest of the implementation does not differ in any way from the Unpack() method presented in the previous article. Once we get the byte array, all that is left is to unpack the cascade file, transform it into the desired shape and extract the relevant information.
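To give an idea of what unpacking from an in-memory byte array means, here is a small sketch using the encoding/binary package. The layout it parses (two little-endian 32-bit fields) and the names header, Depth and NTrees are invented for the illustration; this is not Pigo's actual cascade format, which is handled by the Unpack() method.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// header is a made-up layout used only for this illustration.
type header struct {
	Depth  uint32
	NTrees uint32
}

// parseHeader reads two little-endian uint32 values from the start of buf.
func parseHeader(buf []byte) (header, error) {
	if len(buf) < 8 {
		return header{}, errors.New("buffer too short")
	}
	return header{
		Depth:  binary.LittleEndian.Uint32(buf[0:4]),
		NTrees: binary.LittleEndian.Uint32(buf[4:8]),
	}, nil
}

func main() {
	// In the WASM app these bytes would come from the fetch call.
	buf := []byte{6, 0, 0, 0, 0x1c, 0x01, 0, 0}
	h, err := parseHeader(buf)
	if err != nil {
		fmt.Println("parse failed:", err)
		return
	}
	fmt.Printf("depth=%d trees=%d\n", h.Depth, h.NTrees) // depth=6 trees=284
}
```

Since the parser only works on a byte slice, the same code runs natively and in the browser; only the way the bytes are obtained differs.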

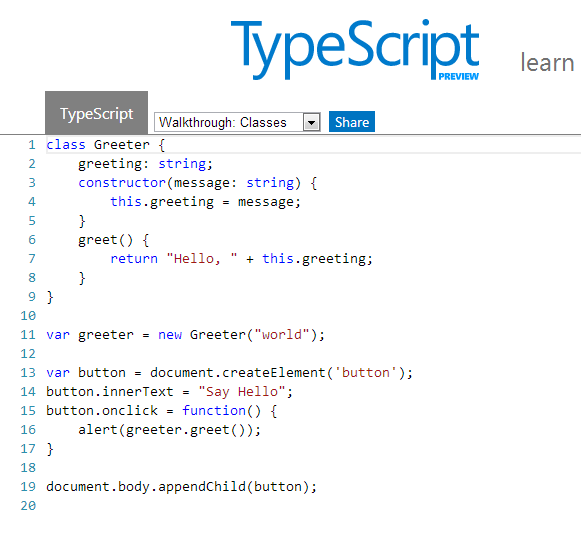

I won’t go into too much detail about the detection algorithm itself, since I’ve discussed it in the previous articles. I will detail what’s most important from the perspective of the WASM integration. Another key part of the WASM integration is the webcam rendering operation. In Javascript we can access the webcam through the navigator.mediaDevices.getUserMedia method, which returns a promise that resolves to the media stream.

We can translate this to Go (WASM) code in the following way:
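Here is a minimal sketch of the webcam access in Go, assuming the standard navigator.mediaDevices.getUserMedia browser API. The function name startWebcam and the way the video element is created are my own choices for the illustration and may differ from the actual Pigo code.

```go
package main

import (
	"errors"
	"fmt"
	"syscall/js"
)

// startWebcam (hypothetical name) asks the browser for a video stream and
// attaches it to a newly created <video> element, which it returns.
func startWebcam() (js.Value, error) {
	doc := js.Global().Get("document")
	video := doc.Call("createElement", "video")

	mediaDevices := js.Global().Get("navigator").Get("mediaDevices")
	if mediaDevices.Type() == js.TypeUndefined {
		return js.Undefined(), errors.New("webcam API not available")
	}

	// Buffered channels, so the callbacks below never block.
	videoCh := make(chan js.Value, 1)
	errCh := make(chan error, 1)

	// Success callback: the promise resolves to a MediaStream.
	onStream := js.FuncOf(func(this js.Value, args []js.Value) interface{} {
		video.Set("srcObject", args[0])
		video.Call("play")
		videoCh <- video
		return nil
	})
	defer onStream.Release()

	// Failure callback: no camera, or the user denied the permission.
	onError := js.FuncOf(func(this js.Value, args []js.Value) interface{} {
		errCh <- errors.New(js.Global().Call("String", args[0]).String())
		return nil
	})
	defer onError.Release()

	// The Go map is converted to a plain JS object by syscall/js.
	constraints := map[string]interface{}{"video": true, "audio": false}
	mediaDevices.Call("getUserMedia", constraints).Call("then", onStream, onError)

	select {
	case v := <-videoCh:
		return v, nil
	case err := <-errCh:
		return js.Undefined(), err
	}
}

func main() {
	if _, err := startWebcam(); err != nil {
		js.Global().Call("alert", "Webcam not detected: "+err.Error())
		return
	}
	fmt.Println("webcam started")
	select {} // keep the program alive while the stream is playing
}
```

The two callbacks passed to then correspond to the success and failure branches of the promise, and the channels bring the result back to the goroutine that called startWebcam.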

This will return a canvas object (struct) on which we can call the rendering method itself. In the background this will trigger the requestAnimationFrame JS method, drawing each webcam frame to a dynamically created image element. From there we can extract the pixel values by calling the getImageData HTML5 canvas method. This returns an object of type ImageData whose values are stored in a Uint8ClampedArray, so they need to be converted to a type supported by the Go WASM API. The only supported uint type in the Go syscall/js API is uint8, so the most suitable way is to convert the Uint8ClampedArray to a Uint8Array. We can do this by calling the following method:
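The conversion can be sketched as follows. This is a minimal example under my own assumptions: pixelsFromCanvas is a hypothetical helper name, and the 2x2 canvas in main exists only to have something to read from.

```go
package main

import (
	"fmt"
	"syscall/js"
)

// pixelsFromCanvas (hypothetical name) reads the content of a 2D canvas
// context and returns it as a Go byte slice in RGBA order.
func pixelsFromCanvas(ctx js.Value, width, height int) []byte {
	// The data property of ImageData is a Uint8ClampedArray.
	clamped := ctx.Call("getImageData", 0, 0, width, height).Get("data")

	// Constructing a Uint8Array from it copies the values into the
	// typed array kind that js.CopyBytesToGo works with.
	u8 := js.Global().Get("Uint8Array").New(clamped)

	pixels := make([]byte, u8.Get("length").Int())
	js.CopyBytesToGo(pixels, u8)
	return pixels
}

func main() {
	doc := js.Global().Get("document")
	canvas := doc.Call("createElement", "canvas")
	canvas.Set("width", 2)
	canvas.Set("height", 2)
	ctx := canvas.Call("getContext", "2d")

	pixels := pixelsFromCanvas(ctx, 2, 2)
	fmt.Println(len(pixels)) // 16: 2x2 pixels, 4 bytes (R, G, B, A) each
}
```

js.CopyBytesToGo is available starting from Go v1.13, so this sketch does not build with the older versions of the WASM API.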

The code below shows the rendering method. It’s pretty common Javascript code adapted to WASM Go, including the code responsible for the face detection, which has been discussed in the previous articles. The most important part is the type conversion; without it we just get an error.

And to put it all together, in the main function we just call the webcam Render() method once the webcam has been initialized; otherwise we show an alert saying that the webcam was not detected.

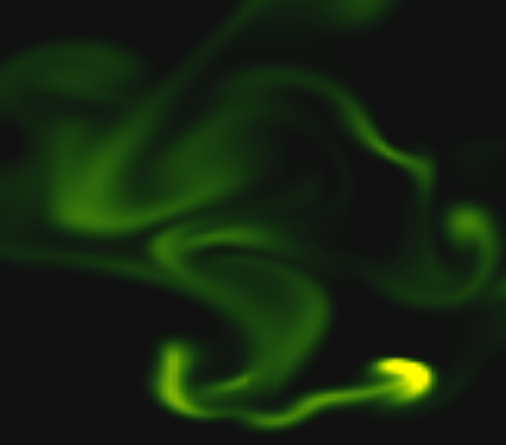

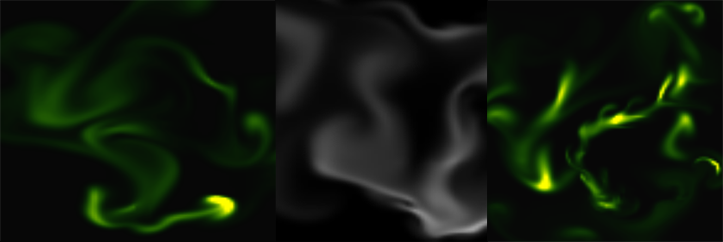

The end result

Voila, we are done. Here is how the WebAssembly version of Pigo looks in real time. Pretty good, huh? That’s all, thanks for reading, and if you like my work you can follow me on Twitter or give the project a star on its Github repo: https://github.com/esimov/pigo.

Look at this! Can OpenCV beat this speed? Pigo has been ported to #webassembly, which gains him a huge speed improvements in terms of real time performance.

This proves that Pigo is capable running real time, which was not quite obvious until now. #golang #wasm #javascript pic.twitter.com/yeKir9K3YC

— Endre Simo (@simo_endre) November 18, 2019